Bishop Hill

Bishop Hill Commenting

Sep 7, 2011  Blogs

Once again, there is a new fix (?) for the commenting issues on the blog.

Can you let me know how it goes, please?

Good luck!

Bishop Hill

Sep 7, 2011  FOI

This is a guest post by David Holland.

Two recent Decision Notices appear to uphold the idea, popular with some climate scientists, that pre-emptive deletion of information gets round the Environmental Information Regulations. The first relates to the University of East Anglia and the email to which Phil Jones attached the CRUTEM data he sent to Georgia Tech. The second relates to the University of Edinburgh and the Russell Review correspondence, all of which was deleted by the University very soon after the Review Report was released. This was well before we learnt all about Geoffrey Boulton's editing of my evidence submission, and Graham Stringer saying the Review was beyond parody.

Bishop Hill

Sep 7, 2011  BBC  Climate: CRU

When the BBC published its review of science coverage, Research Fortnight published a leader criticising Steve Jones' report, saying it was "a victory for the forces of public relations". Now Fiona Fox, of Science Media Centre fame, has responded.

The leader also assumes that research press officers do little but promote beautifully crafted, peer-reviewed studies on new breakthroughs. But what about the press officers at the University of Oxford who spent years persuading reluctant university authorities to open up their animal research facilities to the media, despite a history of violent attacks from animal rights extremists? What about the Imperial College London press officer who spent her weekends and evenings supporting David Nutt after he was sacked as drugs adviser by the Home Secretary? What about the University of East Anglia press officers who managed the fallout from ‘climate-gate’ for over a year while the world sat in judgment on their media-relations strategy?

Can she really not know that the PR campaign at UEA was run by the Outside Organisation?

Bishop Hill

Sep 7, 2011  Climate: HSI  Climate: MWP

I'm currently reading Raymond Bradley's new book Global Warming and Political Intimidation, which is very interesting. The sense I get from the book is of a minor civil servant trying to justify some almighty great shambles over which he has presided, which in a way is what the Hockey Stick story is about.

It's a very political work, with Bradley apparently seeing pretty much everything through a political lens: in several places in the book we are presented with stories of valiant Democrats defending honest scientists from wicked Republicans. We have, in essence, a minor civil servant who thinks he's living in a fairy tale and trying to justify himself to the world.

Because of this political focus, there is remarkably little discussion of the science, and although there is a chapter on the Hockey Stick, there is no mention of bristlecones or principal components analysis. (And before you ask, no, he doesn't mention the Hockey Stick Illusion either.) However, he does make an attempt to defend the science of the Hockey Stick, and that is where my attention is going to be focused. There is quite a lot to say on this subject, so I'm going to break the analysis down into separate posts.

Bishop Hill

Sep 7, 2011  Climate: solar

Anne Jolis has written a very nice, level-headed review of Svensmark and the CLOUD experiment.

But a few physicists weren't worrying about Al Gore in the 1990s. They were theorizing about another possible factor in climate change: charged subatomic particles from outer space, or "cosmic rays," whose atmospheric levels appear to rise and fall with the weakness or strength of solar winds that deflect them from the earth. These shifts might significantly impact the type and quantity of clouds covering the earth, providing a clue to one of the least-understood but most important questions about climate. Heavenly bodies might be driving long-term weather trends.

The theory has now moved from the corners of climate skepticism to the center of the physical-science universe: CERN, also known as the European Organization for Nuclear Research. At the Franco-Swiss home of the world's most powerful particle accelerator, scientists have been shooting simulated cosmic rays into a cloud chamber to isolate and measure their contribution to cloud formation. CERN's researchers reported last month that in the conditions they've observed so far, these rays appear to be enhancing the formation rates of pre-cloud seeds by up to a factor of 10. Current climate models do not consider any impact of cosmic rays on clouds.

Bishop Hill

Sep 6, 2011  Climate: Mann  FOI

The American Tradition Institute have just revealed that Michael Mann has engaged lawyers to try to intervene in the FOIA case between the institute and the University of Virginia.

Dr. Michael Mann, lead author of the discredited "hockey stick" graph that was once hailed by the UN Intergovernmental Panel on Climate Change as the "smoking gun" of the catastrophic man-made global warming theory, has asked to intervene in American Tradition Institute's Freedom of Information Act lawsuit that seeks certain records produced by Mann and others while he was at the University of Virginia, for the purpose of keeping them hidden from the taxpayer.

Specifically over the weekend ATI's Environmental Law Center received service from two Pennsylvania attorneys who seek the court's permission to argue for Dr. Mann to intervene in ATI's case. The attorneys also filed a motion to stay production of documents still withheld by UVA, which are to be provided to ATI's lawyers in roughly two weeks under a protective order that UVA voluntarily agreed to in May. Dr. Mann's lawyers also desire a hearing in mid-September, in an effort to further delay UVA's scheduled production of records under the order.

Dr. Mann's argument, distilled, is that the court must bend the rules to allow him to block implementation of a transparency law, so as to shield his sensibilities from offense once the taxpayer – on whose dime he subsists – sees the methods he employed to advance the global warming theory and related policies. ATI's Environmental Law Center is not sympathetic.

Bishop Hill

Sep 6, 2011  FOI

Heather Brooke is on form again: a perceptive piece on civil servants' use of aggressive PR tactics to try to silence critics, with particular reference to UEA.

It seems there is another tactic gaining strength whereby PRs attempt to silence those uttering inconvenient truths 'Scientology-style' by hunting down criticism and aggressively seeking to have it withdrawn.

I wonder if she knows Bob?

Josh

Sep 6, 2011  Conservatives  Josh  Politicians

Something a bit different - but then so is trying to get your head round a 'green government' bulldozing green spaces. Inspired by George at the Guardian.

Bishop Hill

Sep 6, 2011  Climate: CRU  FOI

UEA has complained to the Guardian about Heather Brooke's article on FOI and universities. They object to her saying that they broke the FOI laws. Heather is surprised by their gall. I don't suppose many readers here are, though.

No doubt the complaint goes along the lines of "nobody has been found guilty of anything", which of course is a different question to whether anyone broke the law. There is no doubt that UEA staff broke the FOI laws, but no, nobody has been found guilty of anything.

Bishop Hill

Richard Smith agrees that Stirling should release their data.

Bishop Hill

Sep 6, 2011  Energy: costs

Richard Drake has posted an e-petition to the gubmint:

Household energy bills are currently projected to increase by 30% - over £300 per annum - by 2020 as a direct result of policies that seek to reduce UK emissions of carbon dioxide. Because of uncertainties in both the science and the politics of climate change, including what other countries will be doing, and the burden such increases put on the poorest and most vulnerable in society, we ask that the increase should be no more than 5% of current energy bills.

You can sign here.

Bishop Hill

Sep 6, 2011  Climate: Models

Updated on Sep 6, 2011 by Bishop Hill

Thanks to Anthony for forwarding me the Dessler comment on Spencer and Braswell. I'll post the same excerpts as AW has so that readers here can discuss.

Cloud variations and the Earth’s energy budget

A.E. Dessler

Dept. of Atmospheric Sciences

Texas A&M University

College Station, TX

Abstract: The question of whether clouds are the cause of surface temperature changes, rather than acting as a feedback in response to those temperature changes, is explored using data obtained between 2000 and 2010. An energy budget calculation shows that the energy trapped by clouds accounts for little of the observed climate variations. And observations of the lagged response of top-of-atmosphere (TOA) energy fluxes to surface temperature variations are not evidence that clouds are causing climate change.

Bishop Hill

Sep 6, 2011  Climate: Surface

Anthony Watts has an interesting post about the temperature record for England, which is showing much more warming than the records for Scotland, Wales and Northern Ireland. Remarkably, it is even diverging from the Central England Temperature record.

I'm sure readers will want to press Richard B for his thoughts on this, but since it's not actually his area, and he is probably already stretched by all the engagement he has been doing here, I have emailed a Met Office press officer whom I met at the Cambridge Conference. Maybe we can get a comment from someone in the know.

Bishop Hill

Sep 5, 2011  Climate: other  Quotes

With a tiny handful of exceptions (Judy, Richard Betts, Hans von Storch, Eduardo Zorita, surely there must be a few more?) the whole of “mainstream” climate science seems to be going into collective meltdown. To ordinary scientists their behaviour just gets more bizarre with every day.

I have worked in all sorts of areas of science, some really quite controversial, and I have never seen this sort of childish throwing of toys out of prams in any other context. I can’t see any solution beyond some proper grown ups getting involved and telling Trenberth and Gleick and friends to sit on the naughty step until they learn how to play nicely.

Jonathan Jones at Climate Etc.

Bishop Hill

Sep 5, 2011  Climate: IPCC  Journals

Roy Spencer has penned some further thoughts on the campaign being waged by the Team, and he is worried:

We simply cannot compete with a good-ole-boy, group think, circle-the-wagons peer review process which has been rewarded with billions of research dollars to support certain policy outcomes.

It is obvious to many people what is going on behind the scenes. The next IPCC report (AR5) is now in preparation, and there is a bust-gut effort going on to make sure that either (1) no scientific papers get published which could get in the way of the IPCC’s politically-motivated goals, or (2) any critical papers that DO get published are discredited with any and all means available.

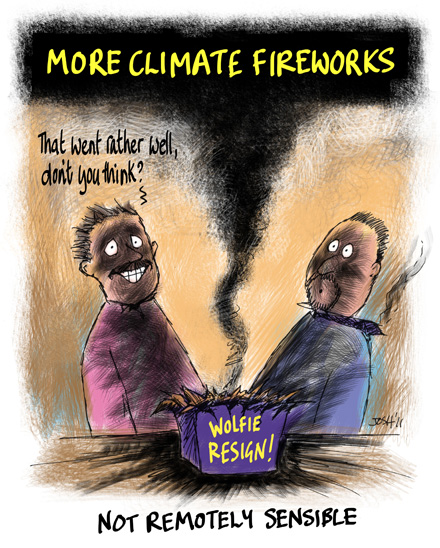

Josh

It is the story of the week - how Wolfgang Wagner may or may not have been pressurised to resign over the publication of Spencer & Braswell. I wonder how the Team feel now?